Senior Software Engineer Rajesh Kesavalalji on Why the Distance Between a Working Demo and a Deployable System Is Wider Than Most Engineers Realize


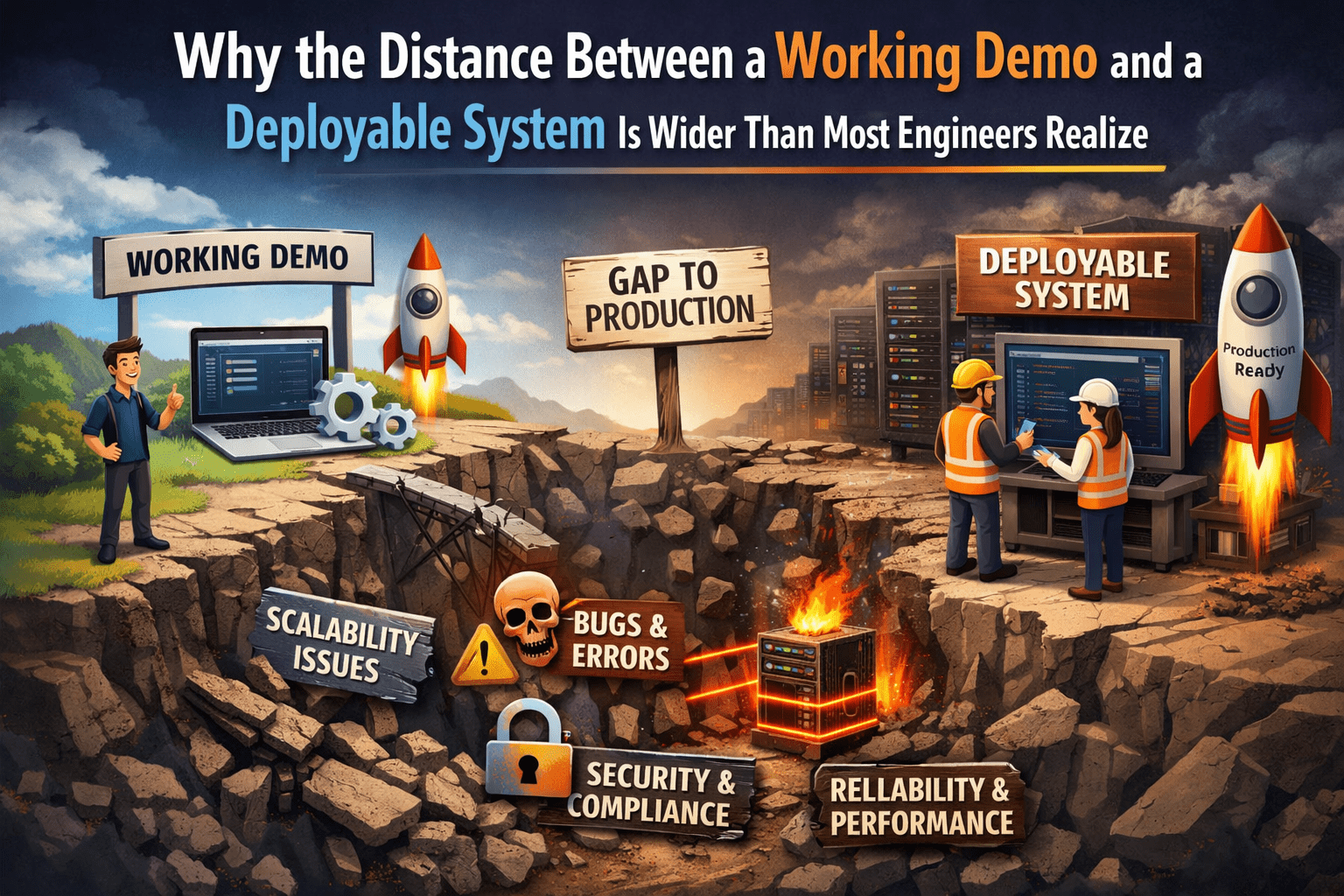

In the fast-moving world of software development, it’s easy to celebrate a working prototype as a finished product. A smooth demo, a clean interface, and a well-written README can give the illusion that a system is ready for real users. But according to seasoned engineers, this assumption is often dangerously misleading.

Senior Software Engineer Rajesh Kesavalalji has spent years working in distributed systems and AI infrastructure, where closing the gap between “it works” and “it works reliably at scale” can take months or even years. His recent evaluation of open-source projects at a global hackathon reinforced a lesson from that work: the biggest challenge in engineering is not building something that works, but building something that survives in the real world.

The Illusion of Completion

In many hackathons and early-stage projects, success is measured by functionality. If the code runs and the demo impresses, the project is often considered complete. However, this mindset hides a deeper issue.

Many teams fail to recognize a subtle but critical form of technical debt. It is not about messy code or missing documentation; it is about building systems optimized for development environments rather than real-world conditions.

These systems are:

- Designed for controlled settings

- Tested on reliable machines

- Presented under ideal conditions

But real users don’t operate in ideal environments. They face unreliable networks, unpredictable inputs, and varying device capabilities. When a system built for a demo meets these challenges, it often breaks.

Rajesh Kesavalalji’s Production Perspective

Rajesh Kesavalalji’s expertise lies in building systems that cannot afford to fail. His work in distributed architecture has taught him that success is defined not by functionality alone, but by resilience.

When evaluating projects at sudo make world 2026, a 72-hour hackathon focused on social impact, he approached each submission with a production mindset. Instead of asking whether a project worked, he asked whether it could continue working under pressure, scale, and real-world constraints.

What he discovered was a consistent pattern: the strongest teams were not those with the most features, but those with the clearest understanding of their system’s limitations.

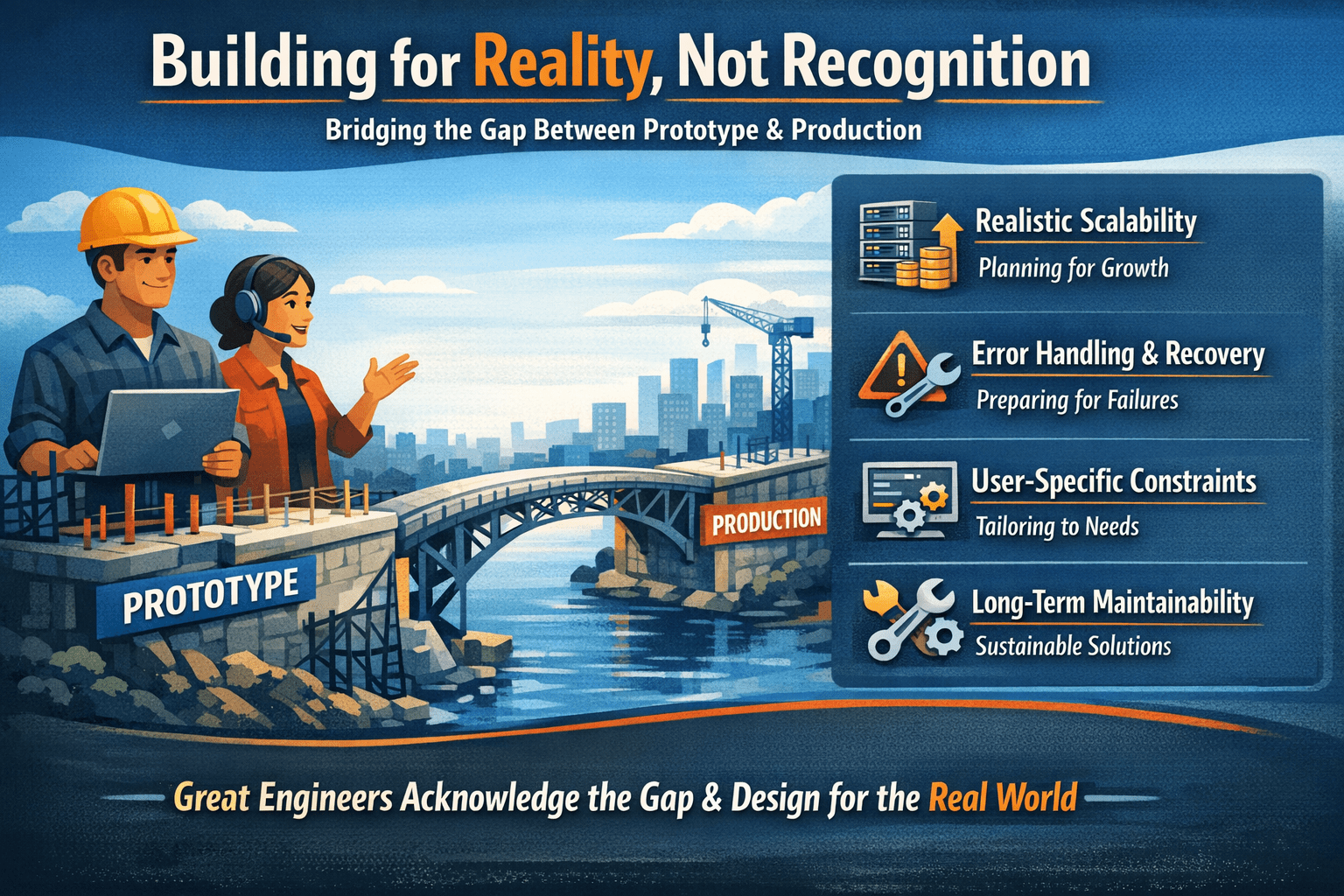

Building for Reality, Not Recognition

One of the most important insights from Kesavalalji’s evaluation was that the best teams did not pretend their projects were finished. Instead, they acknowledged the gap between prototype and production—and designed accordingly.

These teams focused on:

- Realistic scalability

- Error handling and recovery

- User-specific constraints

- Long-term maintainability

This awareness, often overlooked in hackathons, is what separates good engineers from great ones.

When Accuracy Becomes Critical

A standout project in the competition demonstrated how architecture must adapt to the problem it solves. This system was designed to process sensitive information and convert it into legally valid documentation.

In most applications, a lost data packet is a minor inconvenience. Systems can retry or recover. But in this case, losing data could compromise the integrity of the entire process.

Key Design Principles

- Every step in the pipeline tracked the data's origin

- Confidence levels were intentionally limited

- Human verification was required

This approach highlights a key lesson: not all systems should prioritize speed or automation. In high-stakes environments, trust and accuracy matter more than efficiency.
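The three principles above can be sketched in a few lines of Python. This is a minimal illustration, not the team's code: the names `ExtractedField`, `extract`, `verify`, `finalize`, and the `MAX_AUTO_CONFIDENCE` cap of 0.9 are all hypothetical. Each value carries an append-only provenance history, automated confidence is clamped below certainty, and the pipeline refuses to emit a document until a human has signed off on every field.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical cap: automated steps never report more than this confidence.
MAX_AUTO_CONFIDENCE = 0.9


@dataclass
class ProvenanceStep:
    stage: str   # pipeline stage that touched the value
    source: str  # where the input came from, e.g. "upload:page-1"


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float
    history: List[ProvenanceStep] = field(default_factory=list)
    verified_by: Optional[str] = None

    def record(self, stage: str, source: str) -> None:
        # Append-only: earlier provenance entries are never overwritten.
        self.history.append(ProvenanceStep(stage, source))


def extract(name: str, value: str, raw_confidence: float, source: str) -> ExtractedField:
    # Clamp the model's self-reported confidence so automation never claims certainty.
    f = ExtractedField(name, value, min(raw_confidence, MAX_AUTO_CONFIDENCE))
    f.record("extract", source)
    return f


def verify(f: ExtractedField, reviewer: str) -> None:
    # Record the human sign-off as its own provenance step.
    f.verified_by = reviewer
    f.record("human_review", reviewer)


def finalize(fields: List[ExtractedField]) -> Dict[str, str]:
    # Refuse to produce the document until every field has a human sign-off.
    unverified = [f.name for f in fields if f.verified_by is None]
    if unverified:
        raise ValueError(f"unverified fields: {unverified}")
    return {f.name: f.value for f in fields}
```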

Managing Complexity in Distributed Systems

Another project explored the challenge of handling multiple real-time interactions simultaneously. At its core, it was designed to manage conversations across numerous sessions without interference.

This required careful separation between:

- Shared system resources

- Individual session data

If these boundaries were not properly maintained, one failure could cascade across the system.
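One way to keep those boundaries explicit is to give each session its own state object and treat shared resources as read-only. The sketch below assumes a hypothetical `SessionManager` with a toy `_respond` step standing in for real conversation logic; it is not the project's implementation. An error in one session is caught and reported to that session only, so it cannot corrupt another session's history.

```python
import threading
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SessionState:
    history: List[str] = field(default_factory=list)


class SessionManager:
    """Hypothetical manager: shared config is read-only, per-session state is isolated."""

    def __init__(self, shared_config: Dict[str, str]):
        # Shared resource: copied once and never mutated by sessions.
        self._shared = dict(shared_config)
        self._sessions: Dict[str, SessionState] = {}
        self._lock = threading.Lock()  # guards the session map only

    def _state(self, session_id: str) -> SessionState:
        with self._lock:
            return self._sessions.setdefault(session_id, SessionState())

    def handle(self, session_id: str, message: str) -> str:
        # Assumes at most one in-flight message per session.
        state = self._state(session_id)
        try:
            reply = self._respond(state, message)
        except Exception as exc:
            # Contain the failure to this session instead of crashing the service.
            return f"error: {exc}"
        state.history.append(message)
        return reply

    def _respond(self, state: SessionState, message: str) -> str:
        # Toy stand-in for real conversation logic.
        if not message:
            raise ValueError("empty message")
        greeting = self._shared.get("greeting", "hi")
        return f"{greeting}: message {len(state.history) + 1} in this session"
```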

Smart Architectural Choices

- Session isolation to prevent data leakage

- Lightweight models for faster decision-making

- Retry mechanisms for external service failures

This project demonstrated a crucial engineering principle: scalability is not just about handling more users—it’s about maintaining stability as complexity grows.
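Retry mechanisms for external services are commonly implemented as capped exponential backoff with jitter. The sketch below assumes a hypothetical `TransientError` type that callers raise for retryable failures such as timeouts; the default delays are illustrative, not values from the project. The `sleep` parameter is injectable so the logic can be tested without actually waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, temporary outages)."""


def call_with_retry(
    fn: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 0.2,
    max_delay: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on TransientError with capped exponential backoff and full jitter."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            # The delay ceiling doubles each attempt (0.2s, 0.4s, 0.8s, ...) up to
            # max_delay; the actual wait is a random fraction of that ceiling.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")
```

Retrying only on a dedicated transient error type keeps permanent failures, such as invalid input, from being retried pointlessly, and the jitter spreads retries out so that many sessions do not hit a recovering service at the same moment.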

The Challenge of Real-World Systems

Among the most difficult kinds of software to build are systems that mirror real-world processes. Unlike simple applications, they must adapt to constantly changing conditions.

A project focused on resource redistribution highlighted this complexity. It had to manage:

- Time-sensitive data

- Geographic constraints

- Quality and compliance factors

Each variable influenced how the system operated, making the architecture significantly more complex.
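A minimal sketch of how those three constraints might combine into a single eligibility check, assuming hypothetical `Offer` and `Request` records (the article does not describe the project's data model). Quality and compliance act as a hard filter, time sensitivity is checked against the expected pickup time rather than the current time, and geography uses great-circle (haversine) distance, with the nearest offers returned first.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Offer:  # hypothetical record for an available resource
    item: str
    lat: float
    lon: float
    expires_at: datetime
    passed_quality_check: bool


@dataclass
class Request:  # hypothetical record for a recipient's request
    requester: str
    lat: float
    lon: float
    max_distance_km: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    r = 6371.0  # mean Earth radius
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def eligible_offers(req: Request, offers: List[Offer], now: datetime,
                    pickup_time: timedelta) -> List[Offer]:
    """Offers that are compliant, in range, and still valid at pickup, nearest first."""
    matches = []
    for o in offers:
        if not o.passed_quality_check:
            continue  # compliance: never route items that failed checks
        if o.expires_at <= now + pickup_time:
            continue  # time: the item would expire before it could be collected
        d = haversine_km(req.lat, req.lon, o.lat, o.lon)
        if d <= req.max_distance_km:
            matches.append((d, o))
    matches.sort(key=lambda pair: pair[0])
    return [o for _, o in matches]
```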

Why It Worked

The team designed their system to reflect real-world dynamics rather than simplifying them. They accounted for:

- Changing conditions over time

- Multiple user roles

- Different transaction pathways

This approach ensured that the system remained relevant and functional outside of a controlled environment.

When Design Outshines Functionality

Not all projects succeeded equally. One particularly well-designed application offered an intuitive interface for decision-making. It presented users with structured comparisons, risk assessments, and future projections.

While the design was impressive, the underlying system lacked depth. It relied heavily on generalized data rather than user-specific inputs.

The Result

- The outputs felt repetitive

- Recommendations lacked personalization

- Insights offered limited real-world value

This highlights an important lesson: a polished interface cannot compensate for weak data architecture.

The Importance of Deployment

Perhaps the most revealing case involved a technically advanced system that failed to provide a live demonstration. Despite its strong backend, the absence of a working deployment raised serious concerns.

In distributed systems, deployment is more than a final step—it is a validation of the entire architecture.

A system that works locally may fail due to:

- Network latency

- Resource limitations

- Configuration differences

Without proving that the system can run in a real environment, its reliability remains uncertain.
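Configuration differences are the easiest of these failures to catch early. A common pattern is to validate every setting at startup and refuse to run with missing or malformed values, rather than failing on the first real request. The environment variable names and defaults below (`APP_DATABASE_URL`, `APP_REQUEST_TIMEOUT_S`, `APP_WORKERS`) are hypothetical.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class Settings:
    database_url: str
    request_timeout_s: float
    worker_count: int


REQUIRED = ("APP_DATABASE_URL",)  # hypothetical required variable


def load_settings(env: Optional[Mapping[str, str]] = None) -> Settings:
    """Read settings from the environment and fail fast on anything missing or malformed."""
    env = os.environ if env is None else env
    missing = [k for k in REQUIRED if not env.get(k)]
    if missing:
        raise RuntimeError(f"missing required settings: {', '.join(missing)}")
    try:
        timeout = float(env.get("APP_REQUEST_TIMEOUT_S", "5"))
        workers = int(env.get("APP_WORKERS", "2"))
    except ValueError as exc:
        raise RuntimeError(f"malformed setting: {exc}") from exc
    if timeout <= 0 or workers < 1:
        raise RuntimeError("timeout must be positive and workers at least 1")
    return Settings(env["APP_DATABASE_URL"], timeout, workers)
```

Making the request timeout an explicit, validated setting also forces a deliberate decision about network latency in each environment.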

The Role of Explainability in AI Systems

Another noteworthy aspect of the evaluated projects was the integration of explainable AI. Instead of simply providing results, some systems offered insights into how those results were generated.

This is especially important in high-stakes environments where users need to trust the system’s decisions.

By incorporating transparency into the workflow, these projects ensured that users could:

- Understand the reasoning behind outputs

- Validate the system’s accuracy

- Make informed decisions

This approach reflects a growing trend in AI development—moving from black-box models to interpretable systems.
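For simple models, this kind of transparency can be exact. The sketch below assumes a linear scoring model with hypothetical feature names and weights: each feature's contribution is its weight times its value, so the contributions plus the bias add up to the score, and the largest contributions become the explanation shown to the user. Non-linear models need dedicated attribution methods, which this sketch does not cover.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Explained:
    score: float
    contributions: List[Tuple[str, float]]  # (feature, contribution), largest effect first

    def summary(self, top: int = 2) -> str:
        parts = [f"{name} ({value:+.2f})" for name, value in self.contributions[:top]]
        return f"score {self.score:.2f}, driven mainly by " + ", ".join(parts)


def explain_linear(weights: Dict[str, float], bias: float,
                   features: Dict[str, float]) -> Explained:
    """Score with a linear model and report each feature's additive contribution."""
    contribs = [(name, w * features.get(name, 0.0)) for name, w in weights.items()]
    score = bias + sum(c for _, c in contribs)
    # Rank by magnitude so strong negative factors are surfaced too.
    contribs.sort(key=lambda item: abs(item[1]), reverse=True)
    return Explained(score, contribs)
```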

Understanding the Real User

One of Kesavalalji’s most significant observations was that architecture reflects how well engineers understand their users.

In enterprise environments, developers can assume:

- Stable infrastructure

- Reliable connectivity

- Technical support

But in public-facing or social-impact systems, these assumptions do not hold.

Users may face:

- Limited internet access

- Shared devices

- Lack of technical knowledge

Designing for these conditions requires a completely different approach.
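For limited or intermittent connectivity, one common approach is an offline-first outbox: each submission is written to local storage before any network call, then sent when a connection is available. This is a hypothetical sketch, not code from the evaluated projects; `send` stands in for whatever upload function an app uses and is assumed to return `False` when the device is offline.

```python
import json
import os
from typing import Callable, List


class Outbox:
    """Hypothetical offline-first outbox: submissions are saved locally and sent when possible."""

    def __init__(self, path: str):
        self.path = path
        self.pending: List[dict] = []
        if os.path.exists(path):
            with open(path, encoding="utf-8") as fh:
                self.pending = json.load(fh)

    def _save(self) -> None:
        # Write to a temp file, then rename over the original, so an interrupted
        # write cannot leave a half-written queue behind.
        tmp = self.path + ".tmp"
        with open(tmp, "w", encoding="utf-8") as fh:
            json.dump(self.pending, fh)
        os.replace(tmp, self.path)

    def submit(self, record: dict) -> None:
        self.pending.append(record)
        self._save()  # persisted before any network attempt

    def flush(self, send: Callable[[dict], bool]) -> int:
        """Send queued records in order; stop at the first failure and keep the rest."""
        sent = 0
        while self.pending:
            if not send(self.pending[0]):
                break
            self.pending.pop(0)
            sent += 1
            self._save()
        return sent
```

Sending in order and stopping at the first failure preserves submission order. On shared devices, a real app would also need a policy for when queued data is cleared.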

Deployment Empathy: The Missing Ingredient

The most successful projects shared a common trait—what Kesavalalji describes as “deployment empathy.”

This means designing systems with a deep understanding of:

- User environments

- Real-world challenges

- Operational constraints

Instead of focusing solely on features, these teams prioritized usability, accessibility, and reliability.

Redefining Success in Software Engineering

One of the biggest misconceptions in software development is equating functionality with success. A system that works in isolation is not necessarily useful.

True success requires:

- Consistent performance

- Real-world usability

- Scalability under pressure

- Resilience to failure

This shift in perspective is essential for building systems that create real impact.

Lessons for Developers

Based on these insights, developers can improve their approach by focusing on the following:

1. Build for Failure

Assume that things will go wrong and design systems that can recover gracefully.

2. Understand Your Users

Know the environment in which your system will operate.

3. Prioritize Architecture

Strong architecture ensures long-term reliability and scalability.

4. Test Beyond the Happy Path

Include edge cases, failures, and stress scenarios in your testing.

5. Validate Through Deployment

A system is only proven when it works in a real environment.
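As an illustration of point 4, the test below exercises a hypothetical `parse_quantity` input parser with the inputs real users tend to send (blank fields, stray whitespace, decimals, words) alongside the happy path. The function and its rules are assumptions made for the example.

```python
import re
from typing import Optional


def parse_quantity(text: Optional[str]) -> int:
    """Hypothetical parser: accepts a positive whole number, tolerating surrounding whitespace."""
    if text is None or not text.strip():
        raise ValueError("quantity is required")
    cleaned = text.strip()
    if not re.fullmatch(r"[0-9]+", cleaned):
        raise ValueError(f"not a whole number: {cleaned!r}")
    value = int(cleaned)
    if value == 0:
        raise ValueError("quantity must be positive")
    return value


def test_parse_quantity() -> None:
    # Happy path first, then the messy inputs real users actually send.
    assert parse_quantity("12") == 12
    assert parse_quantity("  7\n") == 7
    for bad in (None, "", "   ", "-3", "1.5", "ten", "0"):
        try:
            parse_quantity(bad)
        except ValueError:
            continue
        raise AssertionError(f"accepted bad input: {bad!r}")
```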

Conclusion: Beyond the Demo

The gap between a working demo and a deployable system is not just a technical challenge—it is a mindset shift. It requires engineers to move beyond short-term success and focus on long-term reliability.

Rajesh Kesavalalji’s insights highlight a critical truth: the real measure of a system is not how well it performs in a demo, but how reliably it serves users in the real world.

As software continues to shape industries and communities, this distinction becomes increasingly important. Developers who embrace this perspective will not only build better systems—they will build systems that truly matter.